Multi-view stereo for community photo collections

IEEE International Conference on Computer Vision (ICCV) 2007.

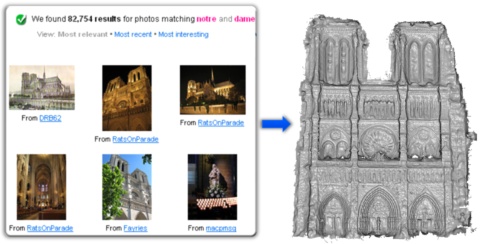

Detailed 3D models reconstructed from crawled Internet images.

Abstract:

We present a multi-view stereo algorithm that addresses the extreme changes in lighting, scale, clutter, and other effects in large online community photo collections. Our idea is to intelligently choose images to match, both at a per-view and per-pixel level. We show that such adaptive view selection enables robust performance even with dramatic appearance variability. The stereo matching technique takes as input sparse 3D points reconstructed from structure-from-motion methods and iteratively grows surfaces from these points. Optimizing for surface normals within a photoconsistency measure significantly improves the matching results. While the focus of our approach is to estimate high-quality depth maps, we also show examples of merging the resulting depth maps into compelling scene reconstructions. We demonstrate our algorithm on standard multi-view stereo datasets and on casually acquired photo collections of famous scenes gathered from the Internet.
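The idea of growing surfaces from sparse seed points can be illustrated with a small best-first propagation loop. This is only a rough sketch under stated assumptions, not the paper's implementation: the function name `grow_depth_map`, the `confidence` callback (a stand-in for a photoconsistency score), the 4-neighbor expansion, and the `min_conf` threshold are all hypothetical, and the sketch copies a neighbor's depth unchanged rather than optimizing depth and surface normal per pixel.

```python
import heapq


def grow_depth_map(seeds, confidence, width, height, min_conf=0.6):
    """Best-first growth of a depth map from sparse seed pixels.

    seeds: dict mapping (x, y) -> initial depth, e.g. projected
        structure-from-motion points (assumed input format).
    confidence: hypothetical callable (x, y, depth) -> score, standing in
        for a photoconsistency measure; higher means a better match.
    Returns a dict mapping (x, y) -> accepted depth.
    """
    depth = {}
    heap = []

    # Queue every seed whose score passes the threshold.
    for (x, y), d in seeds.items():
        c = confidence(x, y, d)
        if c >= min_conf:
            # heapq is a min-heap, so negate the score to pop the best first.
            heapq.heappush(heap, (-c, x, y, d))

    while heap:
        _, x, y, d = heapq.heappop(heap)
        if (x, y) in depth:
            continue  # already filled by a higher-confidence candidate
        depth[(x, y)] = d

        # Propagate the accepted depth to the 4-connected neighbors.
        for nx, ny in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
            if 0 <= nx < width and 0 <= ny < height and (nx, ny) not in depth:
                c = confidence(nx, ny, d)
                if c >= min_conf:
                    heapq.heappush(heap, (-c, nx, ny, d))

    return depth
```

Processing candidates in order of confidence means reliable matches spread first, so a weak candidate cannot claim a pixel before a stronger one that is already queued. Pixels whose score never reaches the threshold are left empty rather than filled with a poor estimate.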

Hindsights:

One application may be to provide useful 3D impostors to improve view interpolation for image-based rendering systems like Photosynth.
