Detailed Publications

|

Sandwiched compression: Repurposing standard codecs with neural network wrappers.

arXiv, 2024.

Improved image and video compression using neural pre- and post-processing.

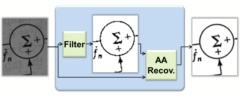

We propose sandwiching standard image and video codecs between pre- and post-processing neural networks.

The networks are jointly trained through a differentiable codec proxy to minimize a given rate-distortion

loss. This sandwich architecture not only improves the standard codec's performance on its intended

content, it can effectively adapt the codec to other types of image/video content and to other distortion

measures. Essentially, the sandwich learns to transmit “neural code images” that optimize

overall rate-distortion performance even when the overall problem is well outside the scope of the codec's

design. Through a variety of examples, we apply the sandwich architecture to sources with different

numbers of channels, higher resolution, higher dynamic range, and perceptual distortion measures. The

results demonstrate substantial improvements (up to 9 dB gains or up to 30% bitrate reductions) compared to

alternative adaptations. We derive VQ equivalents for the sandwich, establish optimality properties, and

design differentiable codec proxies approximating current standard codecs. We further analyze model

complexity, visual quality under perceptual metrics, as well as sandwich configurations that offer

interesting potentials in image/video compression and streaming.
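As a rough sketch of the training objective described above, the rate-distortion loss can be written in Lagrangian form. The symbols here are illustrative assumptions rather than the paper's notation: $f_\theta$ is the neural pre-processor, $g_\psi$ the neural post-processor, $\tilde{C}$ the differentiable codec proxy, $D$ the chosen distortion measure, $R$ the proxy's rate estimate, and $\lambda$ the trade-off weight.

```latex
\min_{\theta,\,\psi}\;
\mathbb{E}_{x}\!\left[
  D\!\big(x,\; g_\psi(\tilde{C}(f_\theta(x)))\big)
  \;+\; \lambda\, R\!\big(f_\theta(x)\big)
\right]
```

Under this reading, swapping $D$ for a perceptual measure, or changing the shape of $x$ (more channels, higher resolution, higher bit depth), changes what the two networks learn while the standard codec itself stays fixed.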

No hindsights yet.

|

|

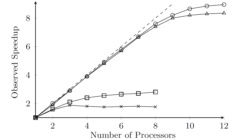

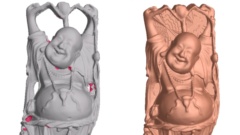

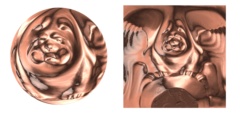

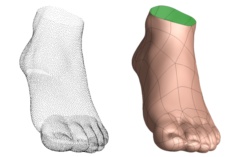

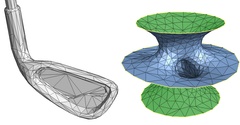

Distributed Poisson surface reconstruction.

Computer Graphics Forum, 42(6), 2023.

Fast slab-based parallel Poisson reconstruction on a compute cluster.

Screened Poisson surface reconstruction robustly creates meshes from oriented point sets. For large

datasets, the technique requires hours of computation and significant memory. We present a method to

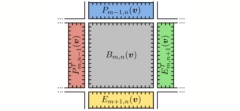

parallelize and distribute this computation over multiple commodity client nodes. The method partitions

space on one axis into adaptively sized slabs containing balanced subsets of points. Because the Poisson

formulation involves a global system, the challenge is to maintain seamless consistency at the slab

boundaries and obtain a reconstruction that is indistinguishable from the serial result. To this end, we

express the reconstructed indicator function as a sum of a low-resolution term computed on a server and

high-resolution terms computed on distributed clients. Using a client–server architecture, we map the

computation onto a sequence of serial server tasks and parallel client tasks, separated by synchronization

barriers. This architecture also enables low-memory evaluation on a single computer, albeit without

speedup. We demonstrate a 700 million vertex reconstruction of the billion point David statue scan in less

than 20 min on a 65-node cluster with a maximum memory usage of 45 GB/node, or in 14 h on a single node.
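The idea of adaptively sized slabs holding balanced subsets of points can be illustrated with a minimal sketch. This is a simplified assumption of how such a partition might be computed (sorting coordinates along one axis and cutting at equal-count positions); the function names are hypothetical, and the paper's actual partitioning may differ, for example by aligning slab boundaries to the solver's grid.

```python
def balanced_slab_boundaries(coords, num_slabs):
    """Return num_slabs + 1 boundaries along one axis so that each slab
    [b[i], b[i+1]) holds roughly the same number of points."""
    if num_slabs < 1:
        raise ValueError("num_slabs must be at least 1")
    ordered = sorted(coords)
    n = len(ordered)
    if n == 0:
        raise ValueError("need at least one point")
    boundaries = [ordered[0]]
    for k in range(1, num_slabs):
        # Coordinate of the first point assigned to slab k.
        boundaries.append(ordered[(k * n) // num_slabs])
    boundaries.append(ordered[-1])
    return boundaries


def count_points_per_slab(coords, boundaries):
    """Count points per slab; the last slab includes its upper boundary."""
    counts = [0] * (len(boundaries) - 1)
    for c in coords:
        slab = 0
        # Advance while the point lies at or beyond the next interior boundary.
        while slab + 1 < len(counts) and c >= boundaries[slab + 1]:
            slab += 1
        counts[slab] += 1
    return counts


# Hypothetical 1D coordinates: dense near 0, sparse elsewhere.
coords = [0.0, 0.1, 0.2, 0.3, 5.0, 5.1, 9.0, 9.5]
boundaries = balanced_slab_boundaries(coords, 4)
print(boundaries)
print(count_points_per_slab(coords, boundaries))
```

In this toy input the slabs have very different widths but the same point count, which is the load-balancing property the abstract refers to. Many points sharing one coordinate would make the counts only approximately equal.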

No hindsights yet.

|

|

Sandwiched image compression: Increasing the resolution and dynamic range of standard codecs.

Picture Coding Symposium (PCS) 2022. (Best Paper Finalist.)

Neural pre- and post-processing for compressing higher-resolution and HDR images.

Given a standard image codec, we compress images that may have higher resolution and/or higher bit depth

than allowed in the codec's specifications, by sandwiching the standard codec between a neural

pre-processor (before the standard encoder) and a neural post-processor (after the standard decoder).

Using a differentiable proxy for the standard codec, we design the neural pre- and post-processors to

transport the high resolution (super-resolution, SR) or high bit depth (high dynamic range, HDR) images as

lower resolution and lower bit depth images. The neural processors accomplish this with spatially coded

modulation, which acts as watermarks to preserve the important image detail during compression.

Experiments show that compared to conventional methods of transmitting high resolution or high bit depth

through lower resolution or lower bit depth codecs, our sandwich architecture gains ~9 dB for SR images and

~3 dB for HDR images at the same rate over large test sets. We also observe significant gains in visual

quality.

No hindsights yet.

|

|

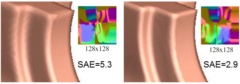

Sandwiched image compression: Wrapping neural networks around a standard codec.

IEEE International Conference on Image Processing (ICIP) 2021.

Improved image compression using neural pre- and post-processing.

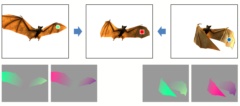

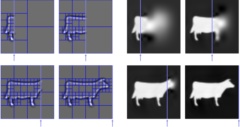

We sandwich a standard image codec between two neural networks: a preprocessor that outputs neural codes,

and a postprocessor that reconstructs the image. The neural codes are compressed as ordinary images by the

standard codec. Using differentiable proxies for both rate and distortion, we develop a rate-distortion

optimization framework that trains the networks to generate neural codes that are efficiently compressible

as images. This architecture not only improves rate-distortion performance for ordinary RGB images, but

also enables efficient compression of alternative image types (such as normal maps of computer graphics)

using standard image codecs. Results demonstrate the effectiveness and flexibility of neural processing in

mapping a variety of input data modalities to the rigid structure of standard codecs. A surprising result

is that the rate-distortion-optimized neural processing seamlessly learns to transport color images using a

single-channel (grayscale) codec.

No hindsights yet.

|

|

Project Starline: A high-fidelity telepresence system.

ACM Trans. Graphics (SIGGRAPH Asia), 40(6), 2021.

Chat with a remote person as if they were copresent.

We present a real-time bidirectional communication system that lets two people, separated by distance,

experience a face-to-face conversation as if they were copresent. It is the first telepresence system that

is demonstrably better than 2D videoconferencing, as measured using participant ratings (e.g., presence,

attentiveness, reaction-gauging, engagement), meeting recall, and observed nonverbal behaviors (e.g., head

nods, eyebrow movements). This milestone is reached by maximizing audiovisual fidelity and the sense of

copresence in all design elements, including physical layout, lighting, face tracking, multi-view capture,

microphone array, multi-stream compression, loudspeaker output, and lenticular display. Our system achieves

key 3D audiovisual cues (stereopsis, motion parallax, and spatialized audio) and enables the full range of

communication cues (eye contact, hand gestures, and body language), yet does not require special glasses or

body-worn microphones/headphones. The system consists of a head-tracked autostereoscopic display,

high-resolution 3D capture and rendering subsystems, and network transmission using compressed color and

depth video streams. Other contributions include a novel image-based geometry fusion algorithm, free-space

dereverberation, and talker localization.

My contributions focused on the real-time compression and rendering technologies.

Related patent publications include “Spatially adaptive video compression for multiple streams of color and depth” and “Image-based geometric fusion of multiple depth images using ray casting”.

|

|

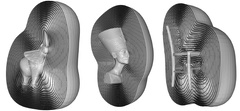

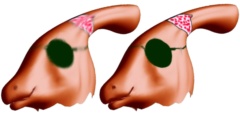

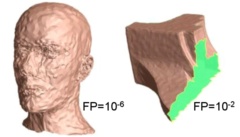

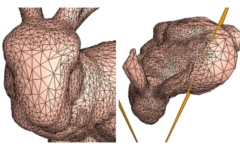

Poisson surface reconstruction with envelope constraints.

Symposium on Geometry Processing 2020.

Improved reconstruction using Dirichlet constraints on visual hull or depth hull.

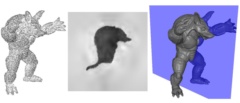

Reconstructing surfaces from scanned 3D points has been an important research area for several decades.

One common approach that has proven efficient and robust to noise is implicit surface reconstruction,

i.e. fitting to the points a 3D scalar function (such as an indicator function or signed-distance field)

and then extracting an isosurface. Though many techniques fall within this category, existing methods

either impose no boundary constraints or impose Dirichlet/Neumann conditions on the surface of a bounding

box containing the scanned data.

In this work, we demonstrate the benefit of supporting Dirichlet constraints on a general boundary. To this end, we adapt the Screened Poisson Reconstruction algorithm to input a constraint envelope in addition to the oriented point cloud. We impose Dirichlet boundary conditions, forcing the reconstructed implicit function to be zero outside this constraint surface. Using a visual hull and/or depth hull derived from RGB-D scans to define the constraint envelope, we obtain substantially improved surface reconstructions in regions of missing data.

No hindsights yet.

|

|

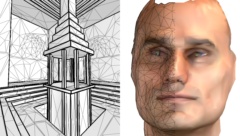

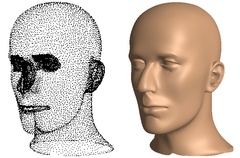

An adaptive multigrid solver for applications in computer graphics.

Computer Graphics Forum, 38(1), 2019.

General-purpose adaptive finite-elements multigrid solver in arbitrary dimension.

A key processing step in numerous computer graphics applications is the solution of a linear system

discretized over a spatial domain. Often, the linear system can be represented using an adaptive domain

tessellation, either because the solution will only be sampled sparsely, or because the solution is known to be

“interesting” (e.g. high-frequency) only in localized regions. In this work, we propose an adaptive,

finite-elements, multigrid solver capable of efficiently solving such linear systems. Our solver is

designed to be general-purpose, supporting finite-elements of different degrees, across different

dimensions, and supporting both integrated and pointwise constraints. We demonstrate the efficacy of our

solver in applications including surface reconstruction, image stitching, and Euclidean Distance Transform

calculation.

No hindsights yet.

|

|

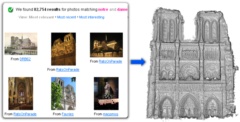

Neural rerendering in the wild.

CVPR 2019 (oral).

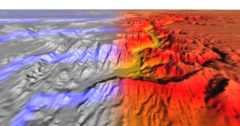

Learn views under varying appearance from internet photos and reconstructed points.

We explore total scene capture — recording, modeling, and rerendering a scene under varying

appearance such as season and time of day. Starting from internet photos of a tourist landmark, we apply

traditional 3D reconstruction to register the photos and approximate the scene as a point cloud. For each

photo, we render the scene points into a deep framebuffer, and train a neural network to learn the mapping

of these initial renderings to the actual photos. This rerendering network also takes as input a latent

appearance vector and a semantic mask indicating the location of transient objects like pedestrians. The

model is evaluated on several datasets of publicly available images spanning a broad range of illumination

conditions. We create short videos demonstrating realistic manipulation of the image viewpoint, appearance,

and semantic labeling. We also compare results with prior work on scene reconstruction from internet

photos.

No hindsights yet.

|

|

Montage4D: Interactive seamless fusion of multiview video textures.

Symposium on Interactive 3D Graphics and Games (I3D), 2018.

Temporally coherent seamless stitching of multi-view textures.

The commoditization of virtual and augmented reality devices and the availability of inexpensive consumer

depth cameras have catalyzed a resurgence of interest in spatiotemporal performance capture. Recent

systems like Fusion4D and Holoportation address several crucial problems in the real-time fusion of

multiview depth maps into volumetric and deformable representations. Nonetheless, stitching multiview

video textures onto dynamic meshes remains challenging due to imprecise geometries, occlusion seams, and

critical time constraints. In this paper, we present a practical solution towards real-time seamless

texture montage for dynamic multiview reconstruction. We build on the ideas of dilated depth

discontinuities and majority voting from Holoportation to reduce ghosting effects when blending textures.

In contrast to their approach, we determine the appropriate blend of textures per vertex using

view-dependent rendering techniques, so as to avert fuzziness caused by the ubiquitous normal-weighted

blending. By leveraging geodesics-guided diffusion and temporal texture fields, our algorithm mitigates

spatial occlusion seams while preserving temporal consistency. Experiments demonstrate significant

enhancement in rendering quality, especially in detailed regions such as faces. We envision a wide range

of applications for Montage4D, including immersive telepresence for business, training, and live

entertainment.

No hindsights yet.

|

|

Gradient-domain processing within a texture atlas.

ACM Trans. Graphics (SIGGRAPH) 37(4), 2018.

Fast processing of surface signals directly in texture domain, avoiding resampling.

Processing signals on surfaces often involves resampling the signal over the vertices of a dense mesh and

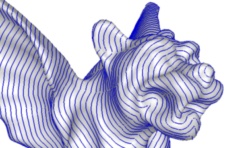

applying mesh-based filtering operators. We present a framework to process a signal directly in a texture

atlas domain. The benefits are twofold: avoiding resampling degradation and exploiting the regularity of

the texture image grid. The main challenges are to preserve continuity across atlas chart boundaries and

to adapt differential operators to the non-uniform parameterization. We introduce a novel function space

and multigrid solver that jointly enable robust, interactive, and geometry-aware signal processing. We

demonstrate our approach using several applications including smoothing and sharpening, multiview

stitching, geodesic distance computation, and line integral convolution.

No hindsights yet.

|

|

Gigapixel panorama video loops.

ACM Trans. Graphics, 37(1), 2018. (Presented at SIGGRAPH 2018.)

Spatiotemporally consistent looping panorama merged from 2D grid of videos.

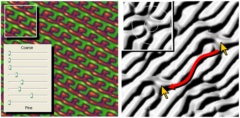

We present the first technique to create wide-angle, high-resolution looping panoramic videos. Starting

with a 2D grid of registered videos acquired on a robotic mount, we formulate a combinatorial optimization

to determine for each output pixel the source video and looping parameters that jointly maximize

spatiotemporal consistency. This optimization is accelerated by reducing the set of source labels using a

graph-coloring scheme. We parallelize the computation and implement it out-of-core by partitioning the

domain along low-importance paths. The merged panorama is assembled using gradient-domain blending and

stored as a hierarchy of video tiles. Finally, an interactive viewer adaptively preloads these tiles for

responsive browsing and allows the user to interactively edit and improve local regions. We demonstrate

these techniques on gigapixel-sized looping panoramas.

No hindsights yet.

|

|

Spatiotemporal atlas parameterization for evolving meshes.

ACM Trans. Graphics (SIGGRAPH) 36(4), 2017.

Tracked mesh with sparse incremental changes in connectivity and texture map.

We convert a sequence of unstructured textured meshes into a mesh with incrementally changing

connectivity and atlas parameterization.

Like prior work on surface tracking, we seek temporally coherent mesh connectivity to enable efficient

representation of surface geometry and texture.

Like recent work on evolving meshes, we pursue local remeshing to permit tracking over long sequences containing significant deformations or topological changes.

Our main contribution is to show that both goals are realizable within a common framework

that simultaneously evolves both the set of mesh triangles and the parametric map.

Sparsifying the remeshing operations allows the formation of large spatiotemporal texture charts.

These charts are packed as prisms into a 3D atlas for a texture video.

Reducing tracking drift using mesh-based optical flow helps improve compression of the resulting video stream.

No hindsights yet.

|

|

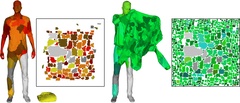

Motion graphs for unstructured textured meshes.

ACM Trans. Graphics (SIGGRAPH), 35(4), 2016.

Automatic smooth transitions between similar meshes in a scanned sequence.

Scanned performances are commonly represented in virtual environments

as sequences of textured triangle meshes.

Detailed shapes deforming over time benefit from

meshes with dynamically evolving connectivity.

We analyze these unstructured mesh sequences to automatically synthesize

motion graphs with new smooth transitions between compatible poses and actions.

Such motion graphs enable natural periodic motions,

stochastic playback, and user-directed animations.

The main challenge of unstructured sequences

is that the meshes differ not only in connectivity

but also in alignment, shape, and texture.

We introduce new geometry processing techniques to address these problems

and demonstrate visually seamless transitions on high-quality captures.

Although most of our data is captured at 30 frames/second, we find that it is easy to interpolate the

geometry (in the GPU) to the native display rate (e.g. 90 fps) for the best experience in a virtual

environment.

|

|

New controls for combining images in correspondence.

IEEE Trans. Vis. Comput. Graphics, 22(7), 2016. (Presented at I3D 2016.)

Multiscale edge-aware melding of geometry and color from two images.

When interpolating images, for instance in the context of morphing, there are myriad approaches for

defining correspondence maps that align structurally similar elements. However, the actual interpolation

usually involves simple functions for both geometric paths and color blending. In this paper we explore

new types of controls for combining two images related by a correspondence map. Our insight is to apply

recent edge-aware decomposition techniques, not just to the image content but to the map itself. Our

framework establishes an intuitive low-dimensional parameter space for merging the shape and color from the

two source images at both low and high frequencies. A gallery-based user interface enables interactive

traversal of this rich space, to either define a morph path or synthesize new hybrid images. Extrapolation

of the shape parameters achieves compelling effects. Finally we demonstrate an extension of the framework

to videos.

No hindsights yet.

|

|

Fast computation of seamless video loops.

ACM Trans. Graphics (SIGGRAPH Asia), 34(6), 2015.

High-quality looping video generated in nearly real-time.

Short looping videos concisely capture the dynamism of natural scenes. Creating seamless loops usually

involves maximizing spatiotemporal consistency and applying Poisson blending. We take an end-to-end view

of the problem and present new techniques that jointly improve loop quality while also significantly

reducing processing time. A key idea is to relax the consistency constraints to anticipate the subsequent

blending, thereby enabling looping of low-frequency content like moving clouds and changing illumination.

We also analyze the input video to remove an undesired bias toward short loops. The quality gains are

demonstrated visually and confirmed quantitatively using a new gradient-domain consistency metric. We

improve system performance by classifying potentially loopable pixels, masking the 2D graph cut, pruning

graph-cut labels based on dominant periods, and optimizing on a coarse grid while retaining finer detail.

Together these techniques reduce computation times from tens of minutes to nearly real-time.

We adapted this video looping into the “Live Images” feature of the Microsoft Pix Camera iOS app, which shipped in July 2016.

|

|

High-quality streamable free-viewpoint video.

ACM Trans. Graphics (SIGGRAPH), 34(4), 2015.

Multimodal reconstruction of tracked textured meshes.

We present the first end-to-end solution to create high-quality free-viewpoint video encoded as a compact

data stream. Our system records performances using a dense set of RGB and IR video cameras, generates

dynamic textured surfaces, and compresses these to a streamable 3D video format. Four technical advances

contribute to high fidelity and robustness: multimodal multi-view stereo fusing RGB, IR, and silhouette

information; adaptive meshing guided by automatic detection of perceptually salient areas; mesh tracking to

create temporally coherent subsequences; and encoding of tracked textured meshes as an MPEG video

stream. Quantitative experiments demonstrate geometric accuracy, texture fidelity, and encoding

efficiency. We release several datasets with calibrated inputs and processed results to foster future

research.

Letting mesh connectivity change temporally allows capture of more general scenes, but makes it more difficult to create transitions between different motions and to create an efficient texture atlas; we address these problems in the subsequent papers “Motion graphs for unstructured textured meshes” and “Spatiotemporal atlas parameterization for evolving meshes”.

|

|

Semi-automated video morphing.

Eurographics Symposium on Rendering, 2014.

Transition across two videos using optimized spatiotemporal alignment.

We explore creating smooth transitions between videos of different scenes. As in traditional image

morphing, good spatial correspondence is crucial to prevent ghosting, especially at silhouettes. Video

morphing presents added challenges. Because motions are often unsynchronized, temporal alignment is also

necessary. Applying morphing to individual frames leads to discontinuities, so temporal coherence must be

considered. Our approach is to optimize a full spatiotemporal mapping between the two videos. We reduce

tedious interaction by letting the optimization derive the fine-scale map given only sparse user-specified

constraints. For robustness, the optimization objective examines structural similarity of the video

content. We demonstrate the approach on a variety of videos, obtaining results using few explicit

correspondences.

No hindsights yet.

|

|

Automating image morphing using structural similarity on a halfway domain.

ACM Trans. Graphics, 33(5), 2014. (Presented at SIGGRAPH 2014.)

Fast optimization to align intricate shapes using little interactive guidance.

The main challenge in achieving good image morphs is to create a map that aligns corresponding image

elements. Our aim is to help automate this often tedious task. We compute the map by optimizing the

compatibility of corresponding warped image neighborhoods using an adaptation of structural similarity.

The optimization is regularized by a thin-plate spline, and may be guided by a few user-drawn points. We

parameterize the map over a halfway domain and show that this representation offers many benefits. The map

is able to treat the image pair symmetrically, model simple occlusions continuously, span partially

overlapping images, and define extrapolated correspondences. Moreover, it enables direct evaluation of the

morph in a pixel shader without mesh rasterization. We improve the morphs by optimizing quadratic motion

paths and by seamlessly extending content beyond the image boundaries. We parallelize the algorithm on a

GPU to achieve a responsive interface and demonstrate challenging morphs obtained with little effort.

One exciting aspect is that, just as in the earlier project “Image-space bidirectional scene reprojection”, the iterative implicit solver is able to evaluate the morph color directly in a pixel shader, without requiring any geometric tessellation, i.e. using only “gather” operations.

|

|

A fresh look at generalized sampling.

Foundations and Trends in Computer Graphics and Vision, 8(1), 2014.

Extension of recent signal-processing techniques to graphics filtering.

Discretization and reconstruction are fundamental operations in computer graphics, enabling the conversion

between sampled and continuous representations. Major advances in signal-processing research have shown

that these operations can often be performed more efficiently by decomposing a filter into two parts: a

compactly supported continuous-domain function and a digital filter. This strategy of “generalized

sampling” has appeared in a few graphics papers, but is largely unexplored in our community. This

survey broadly summarizes the key aspects of the framework, and delves into specific applications in

graphics. Using new notation, we concisely present and extend several key techniques. In addition, we

demonstrate benefits for prefiltering in image downscaling and supersample-based rendering, and analyze the

effect that generalized sampling has on the noise due to Monte Carlo estimation. We conclude with a

qualitative and quantitative comparison of traditional and generalized filters.
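A small example may help make the two-part decomposition concrete. Cubic B-spline interpolation is a standard instance, chosen here for illustration: the compactly supported continuous function is the cubic B-spline kernel, and the digital filter is the inverse of its sampled version. In this sketch the digital filter is applied by solving the tridiagonal system directly (Thomas algorithm) with mirrored boundaries, instead of the recursive causal/anticausal filter pair usually used in practice. All function names are hypothetical.

```python
import math


def bspline3(t):
    """Cubic B-spline kernel: the compactly supported continuous function."""
    t = abs(t)
    if t < 1.0:
        return (4.0 - 6.0 * t * t + 3.0 * t * t * t) / 6.0
    if t < 2.0:
        return (2.0 - t) ** 3 / 6.0
    return 0.0


def prefilter(samples):
    """Digital filter: solve (c[i-1] + 4*c[i] + c[i+1]) / 6 = samples[i]
    with mirrored boundaries (c[-1] = c[1], c[n] = c[n-2])."""
    n = len(samples)
    if n < 2:
        return list(samples)
    lower = [1.0] * n
    diag = [4.0] * n
    upper = [1.0] * n
    upper[0] = 2.0       # mirrored neighbor at the left end
    lower[n - 1] = 2.0   # mirrored neighbor at the right end
    rhs = [6.0 * s for s in samples]
    # Forward elimination (Thomas algorithm).
    for i in range(1, n):
        w = lower[i] / diag[i - 1]
        diag[i] -= w * upper[i - 1]
        rhs[i] -= w * rhs[i - 1]
    # Back substitution.
    coeffs = [0.0] * n
    coeffs[n - 1] = rhs[n - 1] / diag[n - 1]
    for i in range(n - 2, -1, -1):
        coeffs[i] = (rhs[i] - upper[i] * coeffs[i + 1]) / diag[i]
    return coeffs


def evaluate(coeffs, x):
    """Reconstruct the continuous signal at x in [0, len(coeffs) - 1]."""
    n = len(coeffs)
    base = math.floor(x)
    total = 0.0
    for k in range(base - 1, base + 3):
        j = k
        if j < 0:
            j = -j                   # mirror about index 0
        if j > n - 1:
            j = 2 * (n - 1) - j      # mirror about index n - 1
        total += coeffs[j] * bspline3(x - k)
    return total


samples = [1.0, 3.0, 2.0, 5.0, 4.0]
coeffs = prefilter(samples)
print([round(evaluate(coeffs, float(i)), 6) for i in range(len(samples))])
print(evaluate(samples, 1.0))  # kernel alone, no digital filter: smooths
```

With the digital filter applied, the reconstruction passes through the original samples. Using the B-spline kernel on the raw samples alone blurs them instead, which is why the two parts are used together.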

An earlier version of this paper is IMPA Tech. Report E022/2011 & Microsoft Research MSR-TR-2011-16, February 2011.

|

|

Automated video looping with progressive dynamism.

ACM Trans. Graphics (SIGGRAPH), 32(4), 2013.

Representation for seamlessly looping video with controllable level of dynamism.

Given a short video we create a representation that captures a spectrum of looping videos with varying

levels of dynamism, ranging from a static image to a highly animated loop. In such a progressively dynamic

video, scene liveliness can be adjusted interactively using a slider control. Applications include

background images and slideshows, where the desired level of activity may depend on personal taste or mood.

The representation also provides a segmentation of the scene into independently looping regions, enabling

interactive local adjustment over dynamism. For a landscape scene, this control might correspond to

selective animation and deanimation of grass motion, water ripples, and swaying trees. Converting

arbitrary video to looping content is a challenging research problem. Unlike prior work, we explore an

optimization in which each pixel automatically determines its own looping period. The resulting nested

segmentation of static and dynamic scene regions forms an extremely compact representation.

The time-mapping equation (1) has a simpler form: $\phi(x, t) = s_x + ((t - s_x) \bmod p_x)$. (One must be careful that the C/C++ remainder operator “%” differs from the modulo operator “mod” for negative numbers.)
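The remainder-versus-modulo pitfall in the note above can be shown in a few lines. This sketch reads the two per-pixel quantities in the formula as the loop start frame and loop period (an interpretation based on the abstract). The function names are hypothetical. Python's `%` is a floor-based modulo, while `math.fmod` follows the C convention of truncating toward zero, so it stands in here for C/C++ `%`.

```python
import math


def loop_time(t, start, period):
    """Map time t into the loop [start, start + period) using true modulo."""
    if period <= 0:
        raise ValueError("period must be positive")
    # Python's % takes the sign of the divisor, so the result is in [0, period).
    return start + ((t - start) % period)


def loop_time_c_remainder(t, start, period):
    """Same formula with a C-style truncating remainder, to show the pitfall."""
    if period <= 0:
        raise ValueError("period must be positive")
    # math.fmod takes the sign of the dividend, like C/C++ '%' on integers.
    return start + int(math.fmod(t - start, period))


start, period = 10, 4          # loop covers frames 10, 11, 12, 13
print(loop_time(7, start, period))              # t before the loop start
print(loop_time_c_remainder(7, start, period))  # falls outside the loop
print(loop_time(15, start, period))
```

For times before the loop start, the difference is negative: the modulo version still lands inside the loop, while the truncating remainder returns a frame outside it. In C or C++ a common fix is to add the period to a negative remainder.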

Thanks to Mark Finch for preparing and optimizing the code in our demo tool release. See also our more recent work on improving loop quality and speeding up its computation. |

|

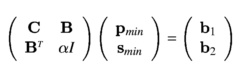

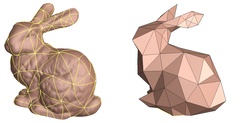

Screened Poisson surface reconstruction.

ACM Trans. Graphics, 32(3), 2013. (Presented at SIGGRAPH 2013.)

Improved geometric fidelity and linear-complexity adaptive hierarchical solver.

Poisson surface reconstruction creates watertight surfaces from oriented point sets. In this work we

extend the technique to explicitly incorporate the points as interpolation constraints. The extension can

be interpreted as a generalization of the underlying mathematical framework to a screened Poisson equation.

In contrast to other image and geometry processing techniques, the screening term is defined over a sparse

set of points rather than over the full domain. We show that these sparse constraints can nonetheless be

integrated efficiently. Because the modified linear system retains the same finite-element discretization,

the sparsity structure is unchanged, and the system can still be solved using a multigrid approach.

Moreover we present several algorithmic improvements that together reduce the time complexity of the solver

to linear in the number of points, thereby enabling faster, higher-quality surface reconstructions.

See also the comments in the earlier paper “Poisson surface reconstruction”, on which this builds.

|

|

Cliplets: Juxtaposing still and dynamic imagery.

Symposium on User Interface Software and Technology (UIST) 2012. (Best Paper Award.)

Cinemagraphs and more general spatiotemporal compositions from handheld video.

We explore creating cliplets, a form of visual media that juxtaposes still image and video segments, both

spatially and temporally, to expressively abstract a moment. Much as in cinemagraphs, the tension between

static and dynamic elements in a cliplet reinforces both aspects, strongly focusing the viewer's attention.

Creating this type of imagery is challenging without professional tools and training. We develop a set of

idioms, essentially spatiotemporal mappings, that characterize cliplet elements, and use these idioms in an

interactive system to quickly compose a cliplet from ordinary handheld video. One difficulty is to avoid

artifacts in the cliplet composition without resorting to extensive manual input. We address this with

automatic alignment, looping optimization and feathering, simultaneous matting and compositing, and

Laplacian blending. A key user-interface challenge is to provide affordances to define the parameters of

the mappings from input time to output time while maintaining a focus on the cliplet being created. We

demonstrate the creation of a variety of cliplet types. We also report on informal feedback as well as a

more structured survey of users.

With cliplets, the user interactively sketches static and dynamic regions, each assigned a uniform looping period. Our subsequent work Automated video looping animates the entire scene without user assistance, and uses combinatorial optimization to infer the optimal looping interval for each pixel. See some nice professionally created cinemagraphs at https://cinemagraphs.com/. |

|

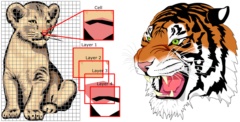

A subdivision-based representation for vector image editing.

IEEE Trans. Vis. Comput. Graphics, 18(11), Nov. 2012. (Presented at I3D 2013.) (Spotlight Paper.)

Piecewise-smooth subdivision for representing and manipulating images.

Vector graphics has been employed in a wide variety of applications due to its scalability and editability.

Editability is a high priority for artists and designers who wish to produce vector-based graphical content

with user interaction. In this paper, we introduce a new vector image representation based on piecewise

smooth subdivision surfaces, which is a simple, unified and flexible framework that supports a variety of

operations, including shape editing, color editing, image stylization, and vector image processing. These

operations effectively create novel vector graphics by reusing and altering existing image vectorization

results. Because image vectorization yields an abstraction of the original raster image, controlling the

level of detail of this abstraction is highly desirable. To this end, we design a feature-oriented vector

image pyramid that offers multiple levels of abstraction simultaneously. Our new vector image

representation can be rasterized efficiently using GPU-accelerated subdivision. Experiments indicate that

our vector image representation achieves high visual quality and better supports editing operations than

existing representations.

Using a common subdivision process to define both the geometric outlines and the (piecewise) smooth color

field is elegant.

|

|

Freeform vector graphics with controlled thin-plate splines.

ACM Trans. Graphics (SIGGRAPH Asia), 30(6), 2011.

Rich set of curve and point controls for intuitive and expressive color interpolation.

Recent work defines vector graphics using diffusion between colored curves. We explore higher-order

fairing to enable more natural interpolation and greater expressive control. Specifically, we build on

thin-plate splines which provide smoothness everywhere except at user-specified tears and creases

(discontinuities in value and derivative respectively). Our system lets a user sketch discontinuity curves

without fixing their colors, and sprinkle color constraints at sparse interior points to obtain smooth

interpolation subject to the outlines. We refine the representation with novel contour and slope curves,

which anisotropically constrain interpolation derivatives. Compound curves further increase editing power

by expanding a single curve into multiple offsets of various basic types (value, tear, crease, slope, and

contour). The vector constraints are discretized over an image grid, and satisfied using a hierarchical

solver. We demonstrate interactive authoring on a desktop CPU.

We should have mentioned the work of

[Xia et al. 2009. Patch-based image vectorization

with automatic curvilinear feature alignment] which also introduces higher-order color reconstruction

for vector graphics. It optimizes parametric Bezier patches with Thin-Plate Spline color functions to

approximate a given image.

|

|

GPU-efficient recursive filtering and summed-area tables.

ACM Trans. Graphics (SIGGRAPH Asia), 30(6), 2011.

Efficient overlapped computation of successive recursive filters on 2D images.

Image processing operations like blurring, inverse convolution, and summed-area tables are often computed

efficiently as a sequence of 1D recursive filters. While much research has explored parallel recursive

filtering, prior techniques do not optimize across the entire filter sequence. Typically, a separate

filter (or often a causal-anticausal filter pair) is required in each dimension. Computing these filter

passes independently results in significant traffic to global memory, creating a bottleneck in GPU systems.

We present a new algorithmic framework for parallel evaluation. It partitions the image into 2D blocks,

with a small band of additional data buffered along each block perimeter. We show that these perimeter

bands are sufficient to accumulate the effects of the successive filters. A remarkable result is that the

image data is read only twice and written just once, independent of image size, and thus total memory

bandwidth is reduced even compared to the traditional serial algorithm. We demonstrate significant

speedups in GPU computation.

For completeness we could have mentioned integral images, a term used in computer vision

[Viola and Jones 2001] to also refer to summed-area tables [Crow 1984].
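As a point of reference for the abstract above, a summed-area table can be built as two successive 1D recursive (prefix-sum) filters, one along rows and one along columns. The sketch below is the plain serial baseline in pure Python, not the overlapped block-parallel GPU algorithm of the paper; the function names `summed_area_table` and `box_sum` are illustrative.

```python
def summed_area_table(image):
    """Return sat where sat[y][x] is the sum of image[0..y][0..x] (inclusive)."""
    height, width = len(image), len(image[0])
    sat = [row[:] for row in image]
    # Pass 1: causal recursive filter along each row, s[x] = v[x] + s[x-1].
    for y in range(height):
        for x in range(1, width):
            sat[y][x] += sat[y][x - 1]
    # Pass 2: the same recursive filter along each column.
    for y in range(1, height):
        for x in range(width):
            sat[y][x] += sat[y - 1][x]
    return sat


def box_sum(sat, x0, y0, x1, y1):
    """Sum of the original image over the inclusive rectangle [x0..x1] x [y0..y1]."""
    total = sat[y1][x1]
    if x0 > 0:
        total -= sat[y1][x0 - 1]
    if y0 > 0:
        total -= sat[y0 - 1][x1]
    if x0 > 0 and y0 > 0:
        total += sat[y0 - 1][x0 - 1]
    return total
```

Each pass here reads and writes the whole image once, which is the kind of per-pass memory traffic that the paper's blocked formulation is designed to avoid.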

|

|

Image-space bidirectional scene reprojection.

ACM Trans. Graphics (SIGGRAPH Asia), 30(6), 2011.

Real-time temporal upsampling through image-based reprojection of adjacent frames.

We introduce a method for increasing the framerate of real-time rendering applications. Whereas many

existing temporal upsampling strategies only reuse information from previous frames, our bidirectional

technique reconstructs intermediate frames from a pair of consecutive rendered frames. This significantly

improves the accuracy and efficiency of data reuse since very few pixels are simultaneously occluded in

both frames. We present two versions of this basic algorithm. The first is appropriate for fill-bound

scenes as it limits the number of expensive shading calculations, but involves rasterization of scene

geometry at each intermediate frame. The second version, our more significant contribution, reduces both

shading and geometry computations by performing reprojection using only image-based buffers. It warps and

combines the adjacent rendered frames using an efficient iterative search on their stored scene depth and

flow. Bidirectional reprojection introduces a small amount of lag. We perform a user study to investigate

this lag, and find that its effect is minor. We demonstrate substantial performance improvements (3-4X)

for a variety of applications, including vertex-bound and fill-bound scenes, multi-pass effects, and motion

blur.

One nice contribution is the idea of using an iterative implicit solver to locate the source pixel

given a velocity field.

In the more recent project Automating image morphing using structural similarity on a halfway domain,

we adapt this idea to evaluate an image morph directly in a pixel shader,

without requiring any geometric tessellation, i.e. using only “gather” operations.
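The idea of locating a source pixel with an iterative implicit solver can be illustrated as a fixed-point iteration. The sketch below is only an assumption-laden toy: the `flow` callable, the normalized time offset `t`, the sign convention `q + t * flow(q) = p`, and the fixed iteration count are all hypothetical choices made for illustration, not details taken from the paper.

```python
def find_source_pixel(p, flow, t, iterations=3):
    """Fixed-point search for a source position q with q + t * flow(q) == p.

    p:     (x, y) position in the intermediate frame.
    flow:  hypothetical callable (x, y) -> (vx, vy), the velocity stored
           at a position in the rendered source frame.
    t:     hypothetical normalized time offset of the intermediate frame.
    """
    qx, qy = p  # initial guess: the pixel did not move
    for _ in range(iterations):
        vx, vy = flow(qx, qy)
        # Re-estimate the source using the velocity found at the current guess.
        qx, qy = p[0] - t * vx, p[1] - t * vy
    return qx, qy
```

The iteration converges when the flow varies slowly relative to the step (a contraction), and it only reads the flow buffer, so it needs no scattering of source pixels.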

|

|

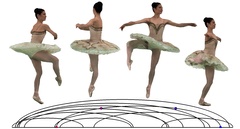

Real-time classification of dance gestures from skeleton animation.

Symposium on Computer Animation 2011. (Honorable Mention.)

Recognition of Kinect motions using robust, low-dimensional feature vectors.

We present a real-time gesture classification system for skeletal wireframe motion. Its key components

include an angular representation of the skeleton designed for recognition robustness under noisy input, a

cascaded correlation-based classifier for multivariate time-series data, and a distance metric based on

dynamic time-warping to evaluate the difference in motion between an acquired gesture and an oracle for the

matching gesture. While the first and last tools are generic in nature and could be applied to any

gesture-matching scenario, the classifier is conceived based on the assumption that the input motion

adheres to a known, canonical time-base: a musical beat. On a benchmark comprising 28 gesture classes,

hundreds of gesture instances recorded using the XBOX Kinect platform and performed by dozens of subjects

for each gesture class, our classifier has an average accuracy of 96.9%, for approximately 4-second

skeletal motion recordings. This accuracy is remarkable given the input noise from the real-time depth

sensor.

This technology was adapted in the

Kinect Star Wars video game.
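For readers unfamiliar with the distance metric named in the abstract, the following is the textbook dynamic time-warping recurrence in pure Python. It is a generic sketch, not the specific variant or feature representation used in the paper; the function name `dtw_distance` and the default per-sample distance are illustrative assumptions.

```python
def dtw_distance(a, b, dist=lambda x, y: abs(x - y)):
    """Classic dynamic time-warping cost between sequences a and b."""
    n, m = len(a), len(b)
    inf = float("inf")
    # cost[i][j] = best cost of aligning a[:i] with b[:j].
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = dist(a[i - 1], b[j - 1])
            # Extend the cheapest of: advance a only, advance b only, advance both.
            cost[i][j] = d + min(cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1])
    return cost[n][m]
```

Because samples may be repeated along the alignment path, two recordings of the same motion performed at slightly different speeds can still score a small distance.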

|

|

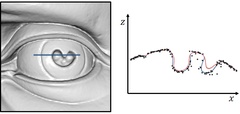

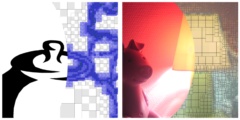

Antialiasing recovery.

ACM Trans. Graphics, 30(3), 2011. (Presented at SIGGRAPH 2011.)

Fast removal of jaggies introduced by many nonlinear image processing operations.

We present a method for restoring antialiased edges that are damaged by certain types of nonlinear image

filters. This problem arises with many common operations such as intensity thresholding, tone mapping,

gamma correction, histogram equalization, bilateral filters, unsharp masking, and certain

non-photorealistic filters. We present a simple algorithm that selectively adjusts the local gradients in

affected regions of the filtered image so that they are consistent with those in the original image. Our

algorithm is highly parallel and is therefore easily implemented on a GPU. Our prototype system can process

up to 500 megapixels per second and we present results for a number of different image filters.

Thanks to Mark Finch

for writing the Direct2D Custom Image Effect to demonstrate this technique!

|

|

Optimizing continuity in multiscale imagery.

ACM Trans. Graphics (SIGGRAPH Asia), 29(6), 2010.

Visually continuous mipmap pyramid spanning differing coarse- and fine-scale images.

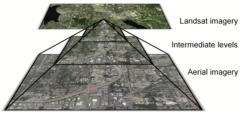

Multiscale imagery often combines several sources with differing appearance. For instance, Internet-based

maps contain satellite and aerial photography. Zooming within these maps may reveal jarring transitions.

We present a scheme that creates a visually smooth mipmap pyramid from stitched imagery at several scales.

The scheme involves two new techniques. The first, structure transfer, is a nonlinear operator that

combines the detail of one image with the local appearance of another. We use this operator to inject

detail from the fine image into the coarse one while retaining color consistency. The improved structural

similarity greatly reduces inter-level ghosting artifacts. The second, clipped Laplacian blending, is an

efficient construction to minimize blur when creating intermediate levels. It considers the sum of all

inter-level image differences within the pyramid. We demonstrate continuous zooming of map imagery from

space to ground level.

No hindsights yet.

|

|

Metric-aware processing of spherical imagery.

ACM Trans. Graphics (SIGGRAPH Asia), 29(6), 2010.

Adaptively discretized equirectangular map for accurate spherical processing.

Processing spherical images is challenging. Because no spherical parameterization is globally uniform, an

accurate solver must account for the spatially varying metric. We present the first efficient metric-aware

solver for Laplacian processing of spherical data. Our approach builds on the commonly used

equirectangular parameterization, which provides differentiability, axial symmetry, and grid sampling.

Crucially, axial symmetry lets us discretize the Laplacian operator just once per grid row. One difficulty

is that anisotropy near the poles leads to a poorly conditioned system. Our solution is to construct an

adapted hierarchy of finite elements, adjusted at the poles to maintain derivative continuity, and

selectively coarsened to bound element anisotropy. The resulting elements are nested both within and

across resolution levels. A streaming multigrid solver over this hierarchy achieves excellent convergence

rate and scales to huge images. We demonstrate applications in reaction-diffusion texture synthesis and

panorama stitching and sharpening.

No hindsights yet.

|

|

Seamless montage for texturing models.

Computer Graphics Forum (Eurographics), 29(2), 479-486, 2010.

Optimized alignment and merging of photographs for texturing approximate geometry.

We present an automatic method to recover high-resolution texture over an object by mapping detailed

photographs onto its surface. Such high-resolution detail often reveals inaccuracies in geometry and

registration, as well as lighting variations and surface reflections. Simple image projection results in

visible seams on the surface. We minimize such seams using a global optimization that assigns compatible

texture to adjacent triangles. The key idea is to search not only combinatorially over the source images,

but also over a set of local image transformations that compensate for geometric misalignment. This broad

search space is traversed using a discrete labeling algorithm, aided by a coarse-to-fine strategy. Our

approach significantly improves resilience to acquisition errors, thereby allowing simple and easy creation

of textured models for use in computer graphics.

No hindsights yet.

|

|

Distributed gradient-domain processing of planar and spherical images.

ACM Trans. Graphics, 29(2), 14, 2010. (Presented at SIGGRAPH 2010.)

Spherical gradient-domain processing on a Terapixel sky.

Gradient-domain processing is widely used to edit and combine images. In this paper we extend the

framework in two directions. First, we adapt the gradient-domain approach to operate on a spherical

domain, to enable operations such as seamless stitching, dynamic-range compression, and gradient-based

sharpening over spherical imagery. An efficient streaming computation is obtained using a new spherical

parameterization with bounded distortion and localized boundary constraints. Second, we design a

distributed solver to efficiently process large planar or spherical images. The solver partitions images

into bands, streams through these bands in parallel within a networked cluster, and schedules computation

to hide the necessary synchronization latency. We demonstrate our contributions on several datasets

including the Digitized Sky Survey, a terapixel spherical scan of the night sky.

See the resulting seamless terapixel night sky in WorldWide Telescope. In this project, the image data had to be parameterized using the “TOAST” spherical map. As explained in the paper, the resulting gradient-domain solution was therefore inexact. Our subsequent paper Metric-aware processing of spherical imagery shows that when working with the (commonly used) equirectangular parameterization, one can adaptively discretize the domain to achieve an efficient, exact solution over the sphere.

|

|

Amortized supersampling.

ACM Trans. Graphics (SIGGRAPH Asia), 28(5), 2009.

Adaptive reuse of pixels from previous frames for high-quality antialiasing.

We present a real-time rendering scheme that reuses shading samples from earlier time frames to achieve

practical antialiasing of procedural shaders. Using a reprojection strategy, we maintain several sets of

shading estimates at subpixel precision, and incrementally update these such that for most pixels only one

new shaded sample is evaluated per frame. The key difficulty is to prevent accumulated blurring during

successive reprojections. We present a theoretical analysis of the blur introduced by reprojection

methods. Based on this analysis, we introduce a nonuniform spatial filter, an adaptive recursive temporal

filter, and a principled scheme for locally estimating the spatial blur. Our scheme is appropriate for

antialiasing shading attributes that vary slowly over time. It works in a single rendering pass on

commodity graphics hardware, and offers results that surpass 4x4 stratified supersampling in quality, at a

fraction of the cost.

Some related work is being pursued in the CryEngine 3 game platform,

as described by Anton Kaplanyan in a SIGGRAPH 2010 course.

It also seems related to the temporal reprojection in NVIDIA TXAA,

although there are few details on its technology.

|

|

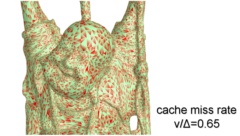

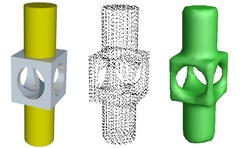

Parallel Poisson surface reconstruction.

International Symposium on Visual Computing 2009.

Parallelization of Poisson reconstruction using domain decomposition.

In this work we describe a parallel implementation of the Poisson Surface Reconstruction algorithm based on

multigrid domain decomposition. We compare implementations using different models of data-sharing between

processors and show that a parallel implementation with distributed memory provides the best scalability.

Using our method, we are able to parallelize the reconstruction of models from one billion data points on

twelve processors across three machines, providing a ninefold speedup in running time without sacrificing

reconstruction accuracy.

See also the original Poisson surface reconstruction paper.

|

|

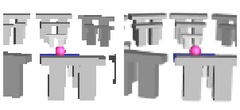

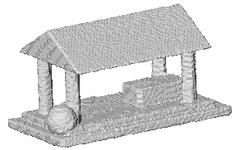

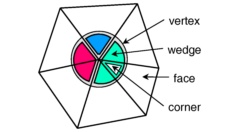

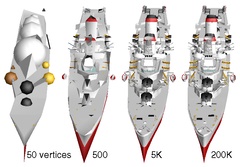

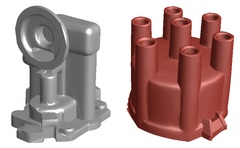

Parallel view-dependent level-of-detail control.

IEEE Trans. Vis. Comput. Graphics, 16(5), 2010.

Extended journal version with applications.

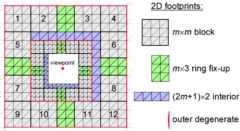

We present a scheme for view-dependent level-of-detail control that is implemented entirely on programmable

graphics hardware. Our scheme selectively refines and coarsens an arbitrary triangle mesh at the

granularity of individual vertices to create meshes that are highly adapted to dynamic view parameters.

Such fine-grain control has previously been demonstrated using sequential CPU algorithms. However, these

algorithms involve pointer-based structures with intricate dependencies that cannot be handled efficiently

within the restricted framework of GPU parallelism. We show that by introducing new data structures and

dependency rules, one can realize fine-grain progressive mesh updates as a sequence of parallel streaming

passes over the mesh elements. A major design challenge is that the GPU processes stream elements in

isolation. The mesh update algorithm has time complexity proportional to the selectively refined mesh, and

moreover can be amortized across several frames. The result is a single standard index buffer that can be

used directly for rendering. The static data structure is remarkably compact, requiring only 57% more

memory than an indexed triangle list. We demonstrate real-time exploration of complex models with normals

and textures, as well as shadowing and semitransparent surface rendering applications that make direct use

of the resulting dynamic index buffer.

This expanded version of the

conference paper

includes new diagrams and demonstrates some more rendering applications.

|

|

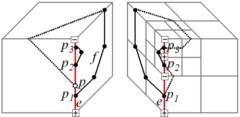

Parallel view-dependent refinement of progressive meshes.

Symposium on Interactive 3D Graphics and Games (I3D) 2009, 169-176.

Selective refinement of irregular mesh hierarchy using GPU streaming passes.

We present a scheme for view-dependent level-of-detail control that is implemented entirely on programmable

graphics hardware. Our scheme selectively refines and coarsens an arbitrary triangle mesh at the

granularity of individual vertices, to create meshes that are highly adapted to dynamic view parameters.

Such fine-grain control has previously been demonstrated using sequential CPU algorithms. However, these

algorithms involve pointer-based structures with intricate dependencies that cannot be handled efficiently

within the restricted framework of GPU parallelism. We show that by introducing new data structures and

dependency rules, one can realize fine-grain progressive mesh updates as a sequence of parallel streaming

passes over the mesh elements. A major design challenge is that the GPU processes stream elements in

isolation. The mesh update algorithm has time complexity proportional to the selectively refined mesh, and

moreover can be amortized across several frames. The static data structure is remarkably compact,

requiring only 57% more memory than an indexed triangle list. We demonstrate real-time exploration of

complex models with normals and textures.

We were invited to write an expanded version

of this conference paper in the IEEE Trans. Vis. Comput. Graphics journal.

|

|

Efficient traversal of mesh edges using adjacency primitives.

ACM Trans. Graphics (SIGGRAPH Asia), 27(5), 2008.

Fast rendering of shadow volumes, silhouettes, and motion blur.

Processing of mesh edges lies at the core of many advanced real-time rendering techniques, ranging from

shadow and silhouette computations, to motion blur and fur rendering. We present a scheme for efficient

traversal of mesh edges that builds on the adjacency primitives and programmable geometry shaders

introduced in recent graphics hardware. Our scheme aims to minimize the number of primitives while

maximizing SIMD parallelism. These objectives reduce to a set of discrete optimization problems on the

dual graph of the mesh, and we develop practical solutions to these graph problems. In addition, we extend

two existing vertex cache optimization algorithms to produce cache-efficient traversal orderings for

adjacency primitives. We demonstrate significant runtime speedups for several practical real-time

rendering algorithms.

It would be nice to extend this approach to the larger patch primitives

introduced in the

DirectX 11 hull shader.

|

|

Random-access rendering of general vector graphics.

ACM Trans. Graphics (SIGGRAPH Asia), 27(5), 2008.

GPU rendering of vector art over surfaces using cell-specialized descriptions.

We introduce a novel representation for random-access rendering of antialiased vector graphics on the GPU,

along with efficient encoding and rendering algorithms. The representation supports a broad class of

vector primitives, including multiple layers of semitransparent filled and stroked shapes, with quadratic

outlines and color gradients. Our approach is to create a coarse lattice in which each cell contains a

variable-length encoding of the graphics primitives it overlaps. These cell-specialized encodings are

interpreted at runtime within a pixel shader. Advantages include localized memory access and the ability

to map vector graphics onto arbitrary surfaces, or under arbitrary deformations. Most importantly, we

perform both prefiltering and supersampling within a single pixel shader invocation, achieving

inter-primitive antialiasing at no added memory bandwidth cost. We present an efficient encoding

algorithm, and demonstrate high-quality real-time rendering of complex, real-world examples.

One natural next step is to perform real-time conversion from the vector graphics primitives to the cell-specialized representation. This might be feasible with the increasing parallelism of the CPU. Also, it would be great to revisit the antialiasing at shape corners and for thin shapes, to further improve prefiltering for small primitives like fonts. Our earlier version of this paper, Texel programs for random-access antialiased vector graphics (MSR-TR-2007-95), also describes how a perfect hash data structure can be adapted as an alternative to the 2D indirection table.

|

|

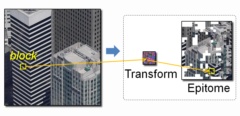

Factoring repeated content within and among images.

ACM Trans. Graphics (SIGGRAPH), 27(3), 2008.

Megatexture encoded by indirecting into an optimized epitome image.

We reduce transmission bandwidth and memory space for images by factoring their repeated content. A

transform map and a condensed epitome are created such that all image blocks can be reconstructed from

transformed epitome patches. The transforms may include affine deformation and color scaling to account

for perspective and tonal variations across the image. The factored representation allows efficient

random-access through a simple indirection, and can therefore be used for real-time texture mapping without

expansion in memory. Our scheme is orthogonal to traditional image compression, in the sense that the

epitome is amenable to further compression such as DXT. Moreover it allows a new mode of progressivity,

whereby generic features appear before unique detail. Factoring is also effective across a collection of

images, particularly in the context of image-based rendering. Eliminating redundant content lets us

include textures that are several times as large in the same memory space.

No hindsights yet.
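The random-access lookup through a simple indirection can be sketched as follows. This is a deliberately reduced illustration: each transform-map entry here holds only a translation into the epitome plus a scalar color scale, whereas the abstract describes more general affine deformations. The names `sample_factored_image`, `transform_map`, and the tuple layout `(ex, ey, scale)` are hypothetical.

```python
def sample_factored_image(epitome, transform_map, block_size, x, y):
    """Reconstruct pixel (x, y) of the factored image on demand.

    epitome:       2D list of scalar pixel values (the condensed image).
    transform_map: 2D list with one hypothetical (ex, ey, scale) entry per
                   image block; (ex, ey) is the patch origin in the epitome.
    """
    # Indirection: find the block containing the pixel and fetch its transform.
    bx, by = x // block_size, y // block_size
    ex, ey, scale = transform_map[by][bx]
    # Apply the (translation-only) transform and the color scaling.
    return scale * epitome[ey + y % block_size][ex + x % block_size]
```

Two image blocks may point at overlapping epitome patches, which is where the memory savings come from; the full image never needs to be expanded.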

|

|

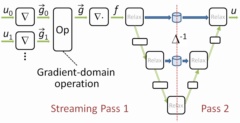

Streaming multigrid for gradient-domain operations on large images.

ACM Trans. Graphics (SIGGRAPH), 27(3), 2008.

Perform k multigrid V-cycles in just k+1 streaming passes over the data.

We introduce a new tool to solve the large linear systems arising from gradient-domain image processing.

Specifically, we develop a streaming multigrid solver, which needs just two sequential passes over

out-of-core data. This fast solution is enabled by a combination of three techniques: (1) use of

second-order finite elements (rather than traditional finite differences) to reach sufficient accuracy in a

single V-cycle, (2) temporally blocked relaxation, and (3) multi-level streaming to pipeline the

restriction and prolongation phases into single streaming passes. A key contribution is the extension of

the B-spline finite-element method to be compatible with the forward-difference gradient representation

commonly used with images. Our streaming solver is also efficient for in-memory images, due to its fast

convergence and excellent cache behavior. Remarkably, it can outperform spatially adaptive solvers that

exploit application-specific knowledge. We demonstrate seamless stitching and tone-mapping of gigapixel

images in about an hour on a notebook PC.

In later work, we distributed the solver computation over a cluster to handle terapixel images,

and generalized the approach to operate over spherical imagery.

|

|

Multi-view stereo for community photo collections.

IEEE International Conference on Computer Vision (ICCV) 2007.

Detailed 3D models reconstructed from crawled Internet images.

We present a multi-view stereo algorithm that addresses the extreme changes in lighting, scale, clutter,

and other effects in large online community photo collections. Our idea is to intelligently choose images

to match, both at a per-view and per-pixel level. We show that such adaptive view selection enables robust

performance even with dramatic appearance variability. The stereo matching technique takes as input sparse

3D points reconstructed from structure-from-motion methods and iteratively grows surfaces from these

points. Optimizing for surface normals within a photoconsistency measure significantly improves the

matching results. While the focus of our approach is to estimate high-quality depth maps, we also show

examples of merging the resulting depth maps into compelling scene reconstructions. We demonstrate our

algorithm on standard multi-view stereo datasets and on casually acquired photo collections of famous

scenes gathered from the Internet.

One application may be to provide useful 3D impostors to improve view interpolation for image-based

rendering systems like Photosynth.

|

|

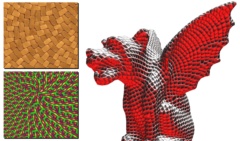

Design of tangent vector fields.

ACM Trans. Graphics (SIGGRAPH), 26(3), 2007.

Interactive control of direction fields for real-time surface texture synthesis.

Tangent vector fields are an essential ingredient in controlling surface appearance for applications

ranging from anisotropic shading to texture synthesis and non-photorealistic rendering. To achieve a

desired effect one is typically interested in smoothly varying fields that satisfy a sparse set of

user-provided constraints. Using tools from Discrete Exterior Calculus, we present a simple and efficient

algorithm for designing such fields over arbitrary triangle meshes. By representing the field as scalars

over mesh edges (i.e. discrete 1-forms), we obtain an intrinsic, coordinate-free formulation in which

field smoothness is enforced through discrete Laplace operators. Unlike previous methods, such a

formulation leads to a linear system whose sparsity permits efficient pre-factorization. Constraints are

incorporated through weighted least squares and can be updated rapidly enough to enable interactive design,

as we demonstrate in the context of anisotropic texture synthesis.

One generalization is N-symmetry fields as in Palacios and Zhang 2007 and Ray et al. 2008. A common drawback in most linear approaches (including ours) is that the objective functional under-penalizes smoothness of the vector field in regions where the vectors have small magnitude. The work of Crane et al. 2010 overcomes this by optimizing over direction angles instead of vectors.

|

|

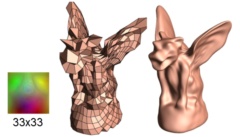

Compressed random-access trees for spatially coherent data.

Symposium on Rendering 2007.

Efficient representation of coherent image data such as lightmaps and alpha mattes.

Adaptive multiresolution hierarchies are highly efficient at representing spatially coherent graphics data.

We introduce a framework for compressing such adaptive hierarchies using a compact randomly-accessible tree

structure. Prior schemes have explored compressed trees, but nearly all involve entropy coding of a

sequential traversal, thus preventing fine-grain random queries required by rendering algorithms. Instead,

we use fixed-rate encoding for both the tree topology and its data. Key elements include the replacement

of pointers by local offsets, a forested mipmap structure, vector quantization of inter-level residuals,

and efficient coding of partially defined data. Both the offsets and codebook indices are stored as byte

records for easy parsing by either CPU or GPU shaders. We show that continuous mipmapping over an adaptive

tree is more efficient using primal subdivision than traditional dual subdivision. Finally, we demonstrate

efficient compression of many data types including light maps, alpha mattes, distance fields, and HDR

images.

The compressed tree structure can also be used for lossless compression,

by replacing vector quantization with simple storage of the residual vectors.

|

|

Unconstrained isosurface extraction on arbitrary octrees.

Symposium on Geometry Processing 2007.

Highly adaptable watertight surface from an unconstrained octree.

This paper presents a novel algorithm for generating a watertight level-set from an octree. We show that

the level-set can be efficiently extracted regardless of the topology of the octree or the values assigned

to the vertices. The key idea behind our approach is the definition of a set of binary edge-trees derived

from the octree's topology. We show that the edge-trees can be used to define the positions of the

isovalue-crossings in a consistent fashion and to resolve inconsistencies that may arise when a single edge

has multiple isovalue-crossings. Using the edge-trees, we show that a provably watertight mesh can be

extracted from the octree without necessitating the refinement of nodes or modification of their values.

No hindsights yet.

|

|

Multi-level streaming for out-of-core surface reconstruction.

Symposium on Geometry Processing 2007.

Out-of-core solution of huge Poisson system to reconstruct 3D scans.

Reconstruction of surfaces from huge collections of scanned points often requires out-of-core techniques,

and most such techniques involve local computations that are not resilient to data errors. We show that a

Poisson-based reconstruction scheme, which considers all points in a global analysis, can be performed

efficiently in limited memory using a streaming framework. Specifically, we introduce a multilevel

streaming representation, which enables efficient traversal of a sparse octree by concurrently advancing

through multiple streams, one per octree level. Remarkably, for our reconstruction application, a

sufficiently accurate solution to the global linear system is obtained using a single iteration of cascadic

multigrid, which can be evaluated within a single multi-stream pass. We demonstrate scalable performance

on several large datasets.

The streaming algorithm in this paper implements a prolongation-only (i.e. cascadic) multigrid solver over

an adaptive 3D octree.

In our ISVC 2009 paper,

we apply domain decomposition to parallelize this cascadic multigrid computation.

Our SIGGRAPH 2008 paper

achieves a full V-cycle multigrid as a streaming algorithm,

although over a regular grid rather than an adaptive quadtree.

|

|

Poisson surface reconstruction.

Symposium on Geometry Processing 2006, 61-70.

(SGP 2021 Test of Time Award.) Reconstruction that considers all points at once for resilience to data noise.

We show that surface reconstruction from oriented points can be cast as a spatial Poisson problem. This

Poisson formulation considers all the points at once, without resorting to heuristic spatial partitioning

or blending, and is therefore highly resilient to data noise. Unlike radial basis function schemes, our

Poisson approach allows a hierarchy of locally supported basis functions, and therefore the solution

reduces to a well conditioned sparse linear system. We describe a spatially adaptive multiscale algorithm

whose time and space complexities are proportional to the size of the reconstructed model. Experimenting

with publicly available scan data, we demonstrate reconstruction of surfaces with greater detail than

previously achievable.

One perceived weakness of this work is that it requires oriented normals at the input points. However, most scanning technologies provide this information, either from line-of-sight knowledge or from adjacent nearby points in a range image. Experiments in [Kazhdan 2005] show that the approach is quite resilient to inaccuracies in the directions of the normals. In our subsequent SGP 2007 paper, we show that the Poisson problem can be solved out-of-core for large models by introducing a multilevel streaming representation of the sparse octree. Our 2013 paper extends the approach for improved geometric fidelity and uses a faster hierarchical solver. The algorithm has been incorporated into MeshLab and VTK, and adapted over tetrahedral grids in CGAL. In 2011, Michael Kazhdan and Matthew Bolitho received the inaugural SGP Software Award for releasing the library code associated with this work. And ten years later at SGP 2021, the paper received the inaugural Test of Time Award.

|

|

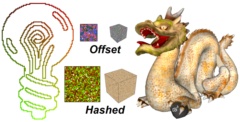

Perfect spatial hashing.

ACM Trans. Graphics (SIGGRAPH), 25(3), 2006.

Sparse spatial data packed into a dense table using a simple collision-free map.

We explore using hashing to pack sparse data into a compact table while retaining efficient random access.

Specifically, we design a perfect multidimensional hash function — one that is precomputed on static

data to have no hash collisions. Because our hash function makes a single reference to a small offset

table, queries always involve exactly two memory accesses and are thus ideally suited for parallel SIMD

evaluation on graphics hardware. Whereas prior hashing work strives for pseudorandom mappings, we instead

design the hash function to preserve spatial coherence and thereby improve runtime locality of reference.

We demonstrate numerous graphics applications including vector images, texture sprites, alpha channel

compression, 3D-parameterized textures, 3D painting, simulation, and collision detection.
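
The two-access query described above can be illustrated with a small sketch. It assumes a 2D domain and a hash of the form h(p) = (p + Φ[p mod r]) mod m, where Φ is the offset table; this form, the greedy largest-bucket-first construction, and all names (`build_perfect_hash`, `lookup`, `build_with_retry`) are illustrative assumptions rather than the paper's code. Python dictionaries stand in for the dense offset and hash tables.

```python
from itertools import product

def build_perfect_hash(points, m, r):
    """Greedy construction sketch: returns (offsets, table), or None on failure.

    offsets maps (x % r, y % r) to a 2D offset (the small offset table);
    table maps a slot in an m x m grid to the point stored there.
    """
    buckets = {}
    for x, y in points:
        buckets.setdefault((x % r, y % r), []).append((x, y))
    offsets, table = {}, {}
    # Assumption: place the largest buckets first, since they are hardest to fit.
    for key, pts in sorted(buckets.items(), key=lambda kv: -len(kv[1])):
        for ox, oy in product(range(m), repeat=2):
            slots = [((x + ox) % m, (y + oy) % m) for x, y in pts]
            if len(set(slots)) == len(slots) and not any(s in table for s in slots):
                offsets[key] = (ox, oy)
                table.update(zip(slots, pts))
                break
        else:
            return None  # no collision-free offset exists for this bucket
    return offsets, table

def lookup(p, offsets, table, m, r):
    """Query with two table reads. Returns the stored point, which the caller
    must compare against p, because a point outside the set can map to an
    occupied slot."""
    off = offsets.get((p[0] % r, p[1] % r))          # access 1: offset table
    if off is None:
        return None
    slot = ((p[0] + off[0]) % m, (p[1] + off[1]) % m)
    return table.get(slot)                            # access 2: hash table

def build_with_retry(points, m, r_max):
    """Try growing offset-table sizes until the greedy construction succeeds."""
    for r in range(1, r_max + 1):
        result = build_perfect_hash(points, m, r)
        if result is not None:
            return r, result[0], result[1]
    raise ValueError("no perfect hash found")

# Ten sparse points in a 16 x 16 domain packed into a 4 x 4 table.
points = [(0, 0), (1, 5), (2, 9), (7, 7), (8, 3),
          (12, 14), (15, 1), (4, 11), (10, 10), (13, 6)]
m = 4
r, offsets, table = build_with_retry(points, m, 16)
```

In this sketch every defined point maps to its own slot, so `lookup(p, offsets, table, m, r)` returns `p` for each point in `points`.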

The perfect hash data structure can be generalized to represent variable-sized data records, as described in Texel programs for random-access antialiased vector graphics (MSR-TR-2007-95). Alcantara et al. [2009] explore parallel construction of perfect spatial hashes.

|

|

Appearance-space texture synthesis.

ACM Trans. Graphics (SIGGRAPH), 25(3), 2006.

Improved synthesis quality and efficiency by pre-transforming the exemplar.

The traditional approach in texture synthesis is to compare color neighborhoods with those of an exemplar.

We show that quality is greatly improved if pointwise colors are replaced by appearance vectors that

incorporate nonlocal information such as feature and radiance-transfer data. We perform dimensionality

reduction on these vectors prior to synthesis, to create a new appearance-space exemplar. Unlike a texton

space, our appearance space is low-dimensional and Euclidean. Synthesis in this information-rich space

lets us reduce runtime neighborhood vectors from 5x5 grids to just 4 locations. Building on this unifying

framework, we introduce novel techniques for coherent anisometric synthesis, surface texture synthesis

directly in an ordinary atlas, and texture advection. Remarkably, we achieve all these functionalities in

real-time, or 3 to 4 orders of magnitude faster than prior work.

The main contribution of this paper is sometimes misinterpreted:

it is not the use of PCA projection to accelerate texture neighborhood comparisons (which is

already done in [Hertzmann et al 2001; Liang et al 2001; Lefebvre and Hoppe 2005]);

rather it is the transformation of the exemplar texture itself (into a new appearance space) to let each

transformed pixel encode nonlocal information.

See "Design of tangent vector fields" for interactive painting of oriented texture over a surface. |

|

Parallel controllable texture synthesis.

ACM Trans. Graphics (SIGGRAPH), 24(3), 2005.

Parallel synthesis of infinite deterministic content, with intuitive user controls.

We present a texture synthesis scheme based on neighborhood matching, with contributions in two areas:

parallelism and control. Our scheme defines an infinite, deterministic, aperiodic texture, from which

windows can be computed in real-time on a GPU. We attain high-quality synthesis using a new analysis

structure called the Gaussian stack, together with a coordinate upsampling step and a subpass correction

approach. Texture variation is achieved by multiresolution jittering of exemplar coordinates. Combined

with the local support of parallel synthesis, the jitter enables intuitive user controls including

multiscale randomness, spatial modulation over both exemplar and output, feature drag-and-drop, and

periodicity constraints. We also introduce synthesis magnification, a fast method for amplifying coarse

synthesis results to higher resolution.
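
The coordinate upsampling and jitter steps can be sketched as follows. This is a minimal illustration under stated assumptions, not the paper's implementation: the synthesized image stores exemplar coordinates, each finer level doubles the resolution, the coordinate spacing is h = 2^(L - level) for an exemplar of size m = 2^L, and the jitter comes from a hash of the pixel position so that any window can be recomputed independently. The correction subpasses (neighborhood matching) are omitted, and all function names are hypothetical.

```python
import hashlib

def jitter(p, level, strength, h):
    """Deterministic offset for pixel p: hash two values into [-1, 1], then scale."""
    digest = hashlib.sha256(f"{level}:{p[0]}:{p[1]}".encode()).digest()
    u = digest[0] / 127.5 - 1.0
    v = digest[1] / 127.5 - 1.0
    return round(h * strength * u), round(h * strength * v)

def upsample_and_jitter(coarse, level, num_levels, m, strength):
    """Each coarse pixel spawns 2 x 2 children whose exemplar coordinates are
    the parent's coordinates plus a spaced child offset plus jitter, mod m."""
    h = 1 << (num_levels - level)        # coordinate spacing at this level
    size = 2 * len(coarse)
    fine = [[None] * size for _ in range(size)]
    for y in range(size):
        for x in range(size):
            pu, pv = coarse[y // 2][x // 2]
            jx, jy = jitter((x, y), level, strength, h)
            fine[y][x] = ((pu + h * (x % 2) + jx) % m,
                          (pv + h * (y % 2) + jy) % m)
    return fine

def synthesize(num_levels, strength):
    """Coarse-to-fine pass starting from a single pixel at exemplar origin."""
    m = 1 << num_levels
    image = [[(0, 0)]]
    for level in range(1, num_levels + 1):
        image = upsample_and_jitter(image, level, num_levels, m, strength)
    return image

tiled = synthesize(3, 0.0)     # no jitter: reproduces the exemplar coordinates
varied = synthesize(3, 1.0)    # jittered: deterministic variation
```

With zero jitter strength the sketch simply reproduces the exemplar layout; with nonzero strength the result varies but is identical on every run, which is the property that lets windows be computed on demand.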

We enhance the quality, efficiency, and functionality of the technique in our subsequent SIGGRAPH 2006 paper. Correction using subpasses is reminiscent of k-color Gauss-Seidel updates. A similar subpass strategy is also adapted in Li-Yi Wei's Parallel Poisson disk sampling.

|

|

Fast exact and approximate geodesics on meshes.

ACM Trans. Graphics (SIGGRAPH), 24(3), 2005.

Efficient computation of shortest paths and distances on triangle meshes.

The computation of geodesic paths and distances on triangle meshes is a common operation in many computer

graphics applications. We present several practical algorithms for computing such geodesics from a source

point to one or all other points efficiently. First, we describe an implementation of the exact "single

source, all destination" algorithm presented by Mitchell, Mount, and Papadimitriou (MMP). We show that the

algorithm runs much faster in practice than suggested by worst case analysis. Next, we extend the

algorithm with a merging operation to obtain computationally efficient and accurate approximations with

bounded error. Finally, to compute the shortest path between two given points, we use a lower-bound

property of our approximate geodesic algorithm to efficiently prune the frontier of the MMP algorithm,

thereby obtaining an exact solution even more quickly.

This computation of geodesic distances is used to improve the texture atlas parameterizations produced by the DirectX UVAtlas tool. See Bommes and Kobbelt 2007 for an interesting extension to compute geodesics from more general (non-point) sources.

|

|

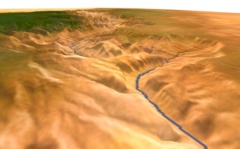

Terrain rendering using GPU-based geometry clipmaps.

GPU Gems 2, M. Pharr and R. Fernando, eds., Addison-Wesley, March 2005.

Real-time terrain rendering with all data processing on the GPU.

The geometry clipmap introduced in Losasso and Hoppe 2004 is a new level-of-detail structure for rendering

terrains. It caches terrain geometry in a set of nested regular grids, which are incrementally shifted as

the viewer moves. The grid structure provides a number of benefits over previous irregular-mesh

techniques: simplicity of data structures, smooth visual transitions, steady rendering rate, graceful

degradation, efficient compression, and runtime detail synthesis. In this chapter, we describe a GPU-based

implementation of geometry clipmaps, enabled by vertex textures. By processing terrain geometry as a set

of images, we can perform nearly all computations on the GPU itself, thereby reducing CPU load. The

technique is easy to implement, and allows interactive flight over a 20-billion-sample grid of the United

States stored in just 355 MB of memory, at around 90 frames per second.
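
The nested, incrementally shifted grids can be illustrated with a short sketch. The conventions here are assumptions made for illustration: level 0 is the finest level with unit sample spacing, each coarser level doubles the spacing, every level holds n x n samples, each window is snapped to the next-coarser grid, and samples are stored with wraparound (toroidal) addressing so that a shift rewrites only the newly exposed columns. The function names are hypothetical.

```python
import math

def level_window(viewer_x, viewer_y, level, n):
    """Return (x0, y0, spacing): the minimum corner, in finest-sample units,
    of the n x n window kept around the viewer at this level."""
    spacing = 1 << level
    snap = 2 * spacing                    # align to the next-coarser grid
    cx = math.floor(viewer_x / snap) * snap
    cy = math.floor(viewer_y / snap) * snap
    half = (n // 2) * spacing
    return cx - half, cy - half, spacing

def columns_to_refill(old_x0, new_x0, spacing, n):
    """List (grid column, storage column) pairs exposed by a horizontal shift.
    Storage uses column index mod n, so untouched columns stay in place."""
    old_i0, new_i0 = old_x0 // spacing, new_x0 // spacing
    old = range(old_i0, old_i0 + n)
    return [(i, i % n) for i in range(new_i0, new_i0 + n) if i not in old]

n = 8
before = level_window(100.3, 50.7, 0, n)   # finest level
coarse = level_window(100.3, 50.7, 2, n)   # two levels coarser
after = level_window(102.3, 50.7, 0, n)    # viewer moved two samples in x
refill = columns_to_refill(before[0], after[0], before[2], n)
```

In this example the finest window moves from x0 = 96 to x0 = 98, so only two of its eight columns need new data; the rows work the same way.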

Unified shader cores in the latest graphics cards (NVIDIA GeForce 8800 and AMD Radeon HD 2900) provide

fast texture sampling in vertex shaders, making this implementation of geometry clipmaps extremely

practical.

The PTC image compressor mentioned in the paper was the precursor to the JPEG XR standard, which is supported in the WIC interface of Windows. See also the original paper and the recent book 3D Engine Design for Virtual Globes [Cozzi and Ring 2011]. |

|

Geometry clipmaps: Terrain rendering using nested regular grids.

ACM Trans. Graphics (SIGGRAPH), 23(3), 2004.

New terrain data structure enabling real-time decompression and synthesis.

Rendering throughput has reached a level that enables a novel approach to level-of-detail (LOD) control in

terrain rendering. We introduce the geometry clipmap, which caches the terrain in a set of nested regular

grids centered about the viewer. The grids are stored as vertex buffers in fast video memory, and are

incrementally refilled as the viewpoint moves. This simple framework provides visual continuity, uniform

frame rate, complexity throttling, and graceful degradation. Moreover it allows two new exciting real-time

functionalities: decompression and synthesis. Our main dataset is a 40GB height map of the United States.

A compressed image pyramid reduces the size by a remarkable factor of 100, so that it fits entirely in

memory. This compressed data also contributes normal maps for shading. As the viewer approaches the

surface, we synthesize grid levels finer than the stored terrain using fractal noise displacement.

Decompression, synthesis, and normal-map computations are incremental, thereby allowing interactive flight

at 60 frames/sec.

In our more recent GPU Gems 2 chapter, we store the terrain as a set of vertex textures, thereby transferring nearly all computation to the GPU. (See the demo available there.)

The PTC image compressor mentioned in the paper was the precursor to the JPEG XR standard, which is supported in the WIC interface of Windows. |

|

Digital photography with flash and no-flash image pairs.

ACM Trans. Graphics (SIGGRAPH), 23(3), 2004.

Combining detail of a flash image with ambient lighting of a non-flash image.

Digital photography has made it possible to quickly and easily take a pair of images of low-light

environments: one with flash to capture detail and one without flash to capture ambient illumination. We

present a variety of applications that analyze and combine the strengths of such flash/no-flash image

pairs. Our applications include denoising and detail transfer (to merge the ambient qualities of the

no-flash image with the high-frequency flash detail), white-balancing (to change the color tone of the

ambient image), continuous flash (to interactively adjust flash intensity), and red-eye removal (to repair

artifacts in the flash image). We demonstrate how these applications can synthesize new images that are of

higher quality than either of the originals.
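
The white-balancing application can be sketched in a few lines. This is a rough illustration under assumptions, not the paper's procedure: images are lists of linear RGB tuples, the difference image Δ = F - A is taken as the flash-only contribution (so it is proportional to surface albedo), the ambient color at each pixel is estimated as the per-channel ratio A / Δ, and the estimates are averaged over pixels that pass a simple threshold. The threshold value and function names are hypothetical.

```python
def estimate_ambient_color(ambient, flash, eps=0.02):
    """Average the per-pixel ratio A / (F - A) over reliable pixels."""
    sums, count = [0.0, 0.0, 0.0], 0
    for a, f in zip(ambient, flash):
        delta = [fc - ac for fc, ac in zip(f, a)]
        if min(delta) <= eps or min(a) <= eps:
            continue                      # skip dark or weakly lit pixels
        for c in range(3):
            sums[c] += a[c] / delta[c]
        count += 1
    if count == 0:
        raise ValueError("no reliable pixels")
    return tuple(s / count for s in sums)

def white_balance(ambient, color):
    """Divide out the estimated ambient color, keeping overall brightness."""
    scale = sum(color) / 3.0
    return [tuple(a[c] * scale / color[c] for c in range(3)) for a in ambient]

# Synthetic scene: three surface albedos under a warm ambient light,
# with a white flash of unit strength.
albedos = [(0.8, 0.6, 0.4), (0.5, 0.5, 0.5), (0.3, 0.7, 0.9)]
light = (0.30, 0.24, 0.15)
ambient = [tuple(k * l for k, l in zip(alb, light)) for alb in albedos]
flash = [tuple(a + k for a, k in zip(amb, alb)) for amb, alb in zip(ambient, albedos)]

estimated = estimate_ambient_color(ambient, flash)
balanced = white_balance(ambient, estimated)
```

In the synthetic scene the estimate recovers the warm light color, and the gray surface comes out neutral after balancing.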

Equation (9) in the paper has a typo; it should be “C_p = A_p / Δ_p”. I really like the image deblurring work of Yuan et al 2007, which uses a blurred/noisy image pair. See also my earlier work on Continuous Flash (demo). |

|

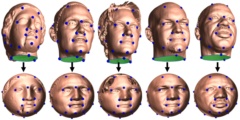

Inter-surface mapping.

ACM Trans. Graphics (SIGGRAPH), 23(3), 2004.

Automatic creation of low-distortion parametrizations between meshes.

We consider the problem of creating a map between two arbitrary triangle meshes. Whereas previous

approaches compose parametrizations over a simpler intermediate domain, we directly create and optimize a

continuous map between the meshes. Map distortion is measured with a new symmetric metric, and is

minimized during interleaved coarse-to-fine refinement of both meshes. By explicitly favoring low

inter-surface distortion, we obtain maps that naturally align corresponding shape elements. Typically, the

user need only specify a handful of feature correspondences for initial registration, and even these

constraints can be removed during optimization. Our method robustly satisfies hard constraints if desired.

Inter-surface mapping is shown using geometric and attribute morphs. Our general framework can also be

applied to parametrize surfaces onto simplicial domains, such as coarse meshes (for semi-regular

remeshing), and octahedron and toroidal domains (for geometry image remeshing). In these settings, we

obtain better parametrizations than with previous specialized techniques, thanks to our fine-grain

optimization.

No hindsights yet.

|

|

Removing excess topology from isosurfaces.

ACM Trans. Graphics, 23(2), April 2004, 190-208.

Repair of tiny topological handles in scanned surface models.

Many high-resolution surfaces are created through isosurface extraction from volumetric representations,

obtained by 3D photography, CT, or MRI. Noise inherent in the acquisition process can lead to geometrical

and topological errors. Reducing geometrical errors during reconstruction is well studied. However,

isosurfaces often contain many topological errors in the form of tiny handles. These nearly

invisible artifacts hinder subsequent operations like mesh simplification, remeshing, and parametrization.

In this article we present a practical method for removing handles in an isosurface. Our algorithm makes

an axis-aligned sweep through the volume to locate handles, compute their sizes, and selectively remove

them. The algorithm is designed to facilitate out-of-core execution. It finds the handles by

incrementally constructing and analyzing a Reeb graph. The size of a handle is measured by a short

nonseparating cycle. Handles are removed robustly by modifying the volume rather than attempting "mesh

surgery". Finally, the volumetric modifications are spatially localized to preserve geometrical detail.

We demonstrate topology simplification on several complex models, and show its benefits for subsequent

surface processing.